If you look at how most production AI agent memory systems are architected, you'll find a familiar pattern: a vector database like Pinecone or Weaviate for semantic search, Elasticsearch or OpenSearch for keyword search, Redis for caching hot memories, and Postgres for structured data. Four services to deploy, four services to monitor, four services that can fail, four bills at the end of the month.

The complexity is treated as inevitable — the price you pay for capable memory. But it isn't inevitable. It's a consequence of reaching for distributed systems tools to solve a problem that doesn't require them.

ZeroClaw's memory is a single SQLite file. Here's why that's not a compromise.

The Infrastructure Trap

Vector databases are genuinely powerful tools. For a RAG system over millions of documents, or a search engine over a large corpus, they're the right choice. But AI agent memory is a different problem.

Most agents store thousands of memories, not millions. A year of daily conversations might produce 50,000 conversation turns. That's not a big data problem — it's a small data problem that gets treated as a big data problem because the tooling was designed for something else.

The cost of that mismatch is real. Pinecone starts at $70/month for production use. Weaviate requires a running server. Every memory lookup is a network round-trip — typically 10-50ms — that adds latency to every response. Your memory is stored in a proprietary format that makes migration painful. And you've added another service to your operational surface: another thing to monitor, another thing to update, another thing that can go down at 3am.

For an AI agent running on a Raspberry Pi or a $5 VPS, this architecture is simply not viable. But even for well-resourced deployments, the complexity isn't buying you anything that a simpler approach can't provide.

Why SQLite Is the Right Foundation

SQLite is very likely the most widely deployed database engine in the world. It ships in every Android and iOS device, in the major web browsers, and in countless embedded systems. It has been in continuous development since 2000, has an exhaustive test suite, and is used in production by companies handling billions of transactions. It is emphatically not a toy.

What makes it right for agent memory specifically is the combination of properties it offers: ACID compliance with WAL mode for concurrent reads, zero configuration (no server, no credentials, no setup), a single portable file that contains your entire memory, and performance characteristics that are hard to beat for the access patterns agents actually use.
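To make the "zero configuration" point concrete, here is a minimal sketch of opening a memory file in WAL mode with Python's standard `sqlite3` module. The `memories` table and the `open_memory_db` helper are illustrative assumptions, not ZeroClaw's actual schema or API.

```python
import os
import sqlite3
import tempfile

def open_memory_db(path: str) -> sqlite3.Connection:
    """Open (or create) the memory file and switch it to WAL mode."""
    conn = sqlite3.connect(path)
    # WAL lets readers keep working while a single writer appends.
    conn.execute("PRAGMA journal_mode=WAL")
    # Hypothetical table, used only for this illustration.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS memories ("
        "id INTEGER PRIMARY KEY, content TEXT NOT NULL)"
    )
    return conn

# A temporary directory stands in for wherever the agent keeps its data.
db_path = os.path.join(tempfile.mkdtemp(), "memory.db")
conn = open_memory_db(db_path)
with conn:  # commits on success, rolls back on error
    conn.execute(
        "INSERT INTO memories (content) VALUES (?)",
        ("Prefers Rust for CLI tools",),
    )

journal_mode = conn.execute("PRAGMA journal_mode").fetchone()[0]
row_count = conn.execute("SELECT COUNT(*) FROM memories").fetchone()[0]
```

There is no server to start and no credentials to manage: the first `connect` call creates the file, and the WAL setting persists in the database for later connections.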

ZeroClaw uses two SQLite extensions together to build a memory system that covers both things agents need: exact recall and semantic understanding.

FTS5: Full-Text Search

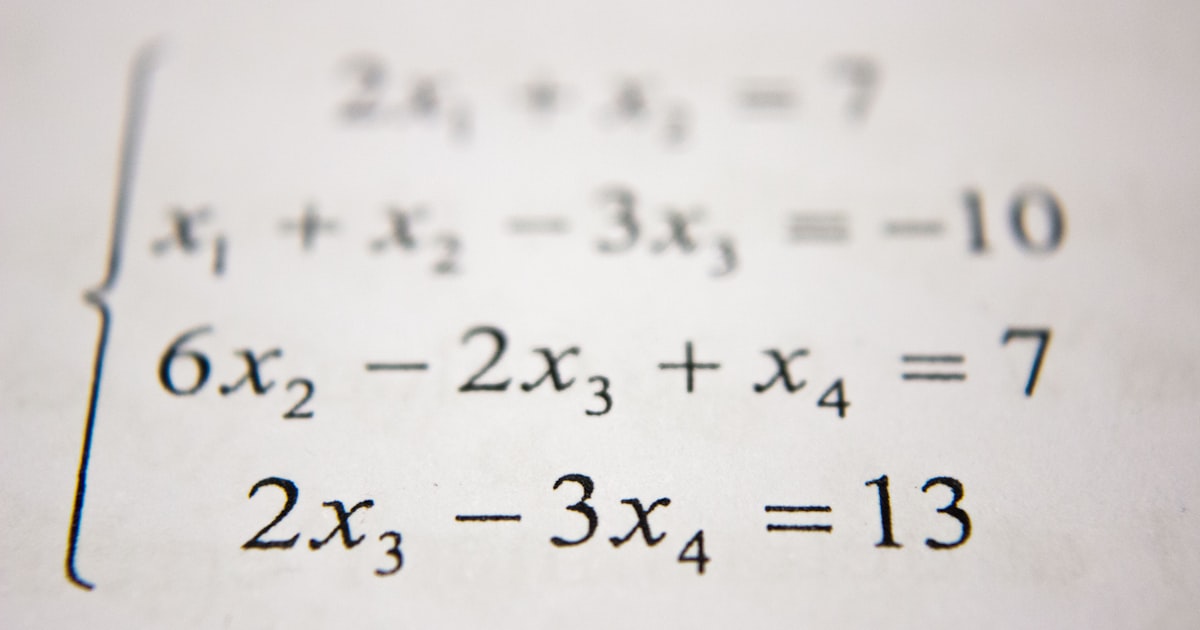

SQLite's FTS5 extension provides fast keyword search with BM25 ranking:

```sql
CREATE VIRTUAL TABLE memory_fts USING fts5(content, tokenize='porter');

-- Search for memories about "Rust deployment"
SELECT * FROM memory_fts
WHERE memory_fts MATCH 'rust deployment'
ORDER BY rank;
```

FTS5 handles tokenization, stemming, and ranking automatically. It's fast — sub-millisecond for typical agent memory sizes — and it excels at exact recall. When you need to find a specific fact, a specific name, or a specific technical term, keyword search finds it reliably.
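As a rough illustration of the stemming and ranking behavior, the sketch below drives the same `memory_fts` table from Python. It assumes the `sqlite3` module is linked against an SQLite build with FTS5 enabled; the sample rows and the `search` helper are invented for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Same table definition as the SQL above; requires an FTS5-enabled SQLite build.
conn.execute(
    "CREATE VIRTUAL TABLE memory_fts USING fts5(content, tokenize='porter')"
)
conn.executemany(
    "INSERT INTO memory_fts (content) VALUES (?)",
    [
        ("Connected the agent to the staging database",),
        ("User prefers dark mode in the editor",),
        ("Database connections time out after 30 seconds",),
    ],
)

def search(query: str, limit: int = 5) -> list:
    """Return (content, bm25 score) rows, best match first."""
    return conn.execute(
        "SELECT content, bm25(memory_fts) FROM memory_fts "
        "WHERE memory_fts MATCH ? ORDER BY rank LIMIT ?",
        (query, limit),
    ).fetchall()

# The porter tokenizer reduces "connection", "connected" and "connections"
# to the same stem, so one query term finds both related rows.
hits = search("connection")
editor_hits = search("editor")
```

FTS5's `bm25()` returns lower values for better matches, which is why `ORDER BY rank` sorts ascending.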

Vector Search: Semantic Similarity

ZeroClaw stores embedding vectors alongside text and performs cosine similarity search directly in SQLite. This handles the cases where keyword search falls short: finding memories that are conceptually related even when they don't share exact words.
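One way this could look, sketched under assumptions: embeddings are stored as float32 BLOBs and compared with a cosine function registered on the connection. The article does not say how ZeroClaw implements the comparison, so the `memory_vec` table, the `cosine_sim` function, and the tiny three-dimensional vectors are all hypothetical stand-ins for real embeddings.

```python
import math
import sqlite3
import struct

def pack(vec) -> bytes:
    """Serialize a vector as little-endian float32 for a BLOB column."""
    return struct.pack(f"<{len(vec)}f", *vec)

def unpack(blob: bytes):
    return struct.unpack(f"<{len(blob) // 4}f", blob)

def cosine_sim(a: bytes, b: bytes) -> float:
    """Cosine similarity between two packed vectors (0.0 if either is zero)."""
    x, y = unpack(a), unpack(b)
    dot = sum(p * q for p, q in zip(x, y))
    norm = math.sqrt(sum(p * p for p in x)) * math.sqrt(sum(q * q for q in y))
    return dot / norm if norm else 0.0

conn = sqlite3.connect(":memory:")
# Expose the Python function to SQL under the name cosine_sim.
conn.create_function("cosine_sim", 2, cosine_sim)
conn.execute("CREATE TABLE memory_vec (content TEXT, embedding BLOB)")
conn.executemany(
    "INSERT INTO memory_vec VALUES (?, ?)",
    [
        ("deploying on ARM hardware", pack([0.9, 0.1, 0.0])),
        ("the project deadline", pack([0.0, 0.2, 0.9])),
    ],
)

def semantic_search(query_vec, limit: int = 5) -> list:
    """Brute-force scan: score every row, return the most similar first."""
    return conn.execute(
        "SELECT content, cosine_sim(embedding, ?) AS score "
        "FROM memory_vec ORDER BY score DESC LIMIT ?",
        (pack(query_vec), limit),
    ).fetchall()

# Toy query vector standing in for the embedding of a hardware-related question.
results = semantic_search([0.8, 0.2, 0.1])
```

A full scan like this is linear in the number of memories, which is acceptable at the tens-of-thousands scale discussed earlier and needs no separate index structure.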

"My Raspberry Pi setup" matches "deploying on ARM hardware" even though they share no keywords. "The project deadline" matches "when is it due" even though the phrasing is completely different. Vector search understands meaning, not just words.

Hybrid Search: Getting Both Right

Neither approach alone is sufficient. Keyword search misses semantic connections — "car" won't find "automobile." Vector search misses exact matches — searching for "CVE-2026-25253" might return vaguely related security content instead of the specific CVE you're looking for.

ZeroClaw runs both searches in parallel and merges results using Reciprocal Rank Fusion:

```
score(doc) = 1/(k + rank_fts) + 1/(k + rank_vector)
```

Here `k` is a constant (typically 60) that damps the influence of rank position: the smaller `k` is, the more the top-ranked results dominate. A document that is missing from one result list contributes nothing for that term, so documents that rank highly in both searches rise to the top, while documents matched by only one method still appear, but lower. The result is memory retrieval that handles both "what exactly did I say about X" and "what did I say that's related to X" correctly.
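The fusion step itself is only a few lines. The sketch below applies the formula to two ranked lists of memory IDs; the `rrf_merge` name and the sample IDs are made up for illustration.

```python
from collections import defaultdict

def rrf_merge(rankings, k: int = 60):
    """Fuse ranked lists of IDs with Reciprocal Rank Fusion.

    Each list contributes 1 / (k + rank) for every ID it contains,
    with ranks starting at 1. IDs absent from a list get nothing from it.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

fts_hits = ["m7", "m2", "m9"]     # keyword results, best first
vector_hits = ["m2", "m4", "m7"]  # semantic results, best first
merged = rrf_merge([fts_hits, vector_hits])
merged_ids = [doc_id for doc_id, _ in merged]
# merged_ids == ["m2", "m7", "m4", "m9"]
```

Here `m2` and `m7` appear in both lists and therefore outrank `m4` and `m9`, which each appear in only one.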

The Performance Numbers

On a Raspberry Pi Zero 2 W — the most constrained hardware ZeroClaw commonly runs on — memory retrieval takes under 3ms total: roughly 0.3ms for FTS5 search, 2ms for vector search, and 0.1ms to merge the results. On x86 hardware, these numbers are sub-millisecond.

For comparison, a network round-trip to Pinecone or Weaviate typically takes 10-50ms. By those figures, ZeroClaw's entire retrieval completes in a fraction of the time a single remote lookup spends on the network.

Your Memory Is Just a File

The practical consequence of SQLite-based memory is that your entire conversation history, all your agent's learned context, everything it knows about you — lives in a single file called `memory.db`.

Backing it up is `cp memory.db memory.db.bak` while the agent is stopped (for a database that is in use, SQLite's `.backup` command produces a consistent copy). Moving it to a new machine is copying the file. Inspecting it is opening it with any SQLite client. There are no export tools, no migration scripts, no API calls to make. It's a file, and files are the most portable, most understood, most durable data format that exists.
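For a database that is still open, especially in WAL mode where recent writes may sit in a separate `memory.db-wal` file, a consistent snapshot can be taken through SQLite's online backup API. A minimal sketch using Python's `sqlite3.Connection.backup`; the `backup_memory` helper, the paths, and the `memories` table are illustrative.

```python
import os
import sqlite3
import tempfile

def backup_memory(src_path: str, dest_path: str) -> None:
    """Copy a possibly in-use database to dest_path as a consistent snapshot."""
    src = sqlite3.connect(src_path)
    dest = sqlite3.connect(dest_path)
    try:
        src.backup(dest)  # SQLite's online backup API
    finally:
        dest.close()
        src.close()

workdir = tempfile.mkdtemp()
src_path = os.path.join(workdir, "memory.db")
bak_path = os.path.join(workdir, "memory.db.bak")

# Simulate a running agent that holds the database open in WAL mode.
live = sqlite3.connect(src_path)
live.execute("PRAGMA journal_mode=WAL")
live.execute("CREATE TABLE memories (content TEXT)")
live.execute("INSERT INTO memories VALUES ('likes short answers')")
live.commit()

backup_memory(src_path, bak_path)  # taken while `live` is still open

check = sqlite3.connect(bak_path)
restored = check.execute("SELECT content FROM memories").fetchall()
check.close()
```

The backup is itself an ordinary SQLite file, so it can be opened, queried, or copied to another machine like the original.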

This matters more than it might seem. When your memory is in Pinecone, it's in Pinecone's format, on Pinecone's servers, accessible through Pinecone's API. When Pinecone changes their pricing, or their API, or goes down, your memory is affected. When your memory is in a SQLite file on your machine, none of that applies.

Zero infrastructure, zero cost, zero complexity, and performance that external databases can't match because there's no network in the way. For AI agent memory, that's not a compromise — it's the right design.